Capability Jumps & Specialist Models

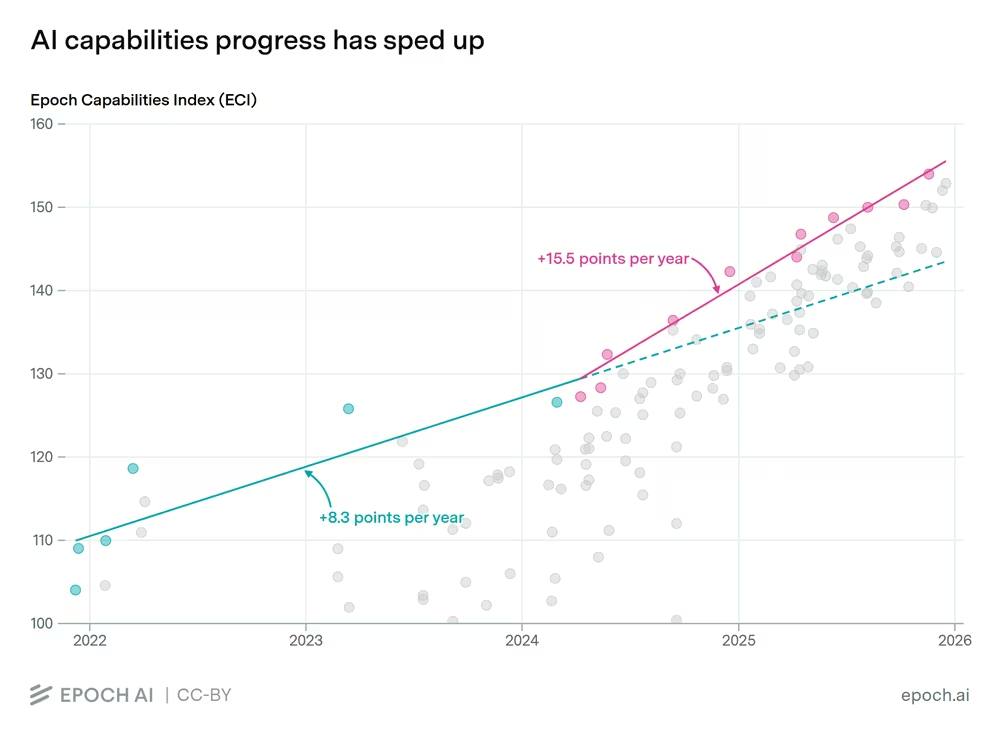

The key argument here is not about when AGI arrives; that is a red herring. Current models are improving rapidly, and 2026 may bring a significant leap in reasoning capability.

Some background to this. When DeepSeek released the R1 paper, it contained a buried observation. When you did not force these models to ‘reason’ or ‘show their working’ in a human-readable way, they made up their own language, metaphorically speaking, and still got to the result. In fact, they got there more efficiently. This video explains it well.

Forcing the models to reason in a human-readable way is for safety and governance. But if you just apply safety and governance to the outcome, could the models perform better? OpenAI thinks so, and I am pretty sure others are testing it too.

Let’s anthropomorphise this for a second (always a dangerous thing to do): it kind of makes sense. I do not believe we think in a language. Thinking just happens, and there is far more going on than we can ‘see’; the language part is, in my view, some sort of interface through which we comprehend thoughts. So if I am right in that postulation, LLMs could be ‘freed’ from an artificial restriction.

Why should we care?

Well, at the moment, generative AI and diffusion models are incredibly computationally expensive. So, anything that reduces the ‘computational cost’ of the outcome by being more efficient in the reasoning part is a good thing, in terms of the energy and computing this technology requires. The energy demands alone are forcing companies like Google to dial down carbon neutrality aspirations from definite targets to moonshots.

The second reason is that it could make LLMs more consistent and reliable. Over the last 18 months, I have found them good in capability, but their unpredictable and almost random behaviour at times limits their usefulness.

You can see the full data for this graph at Epoch.AI.

We have all had a GPT moment where we go, “That is so obviously wrong,” and it goes, “You are so right, I apologise,” etc., etc. Even Ilya Sutskever calls this out. And you end up in a loop that wastes your time and, rightly, causes you to distrust the tech.

Your prompt-and-context combo consistently gets you the result you want and then, just as you relax, you get an aardvark.

Are the ‘evals’ all wrong?

Here is a notion: the evals for the models are steeped in math and science. That does not help when evaluating their capability in the messy reality of how work actually gets done, like arguing with a client about why you won't change the site's tone of voice just because they have paid you £10,000.

The evals seem focused on solving PhD-level physics problems or other predetermined problems. Even then, these things get it completely wrong when they collide with the real world, as in this video.

Those evals are super useful when you are looking to solve very complex, remarkable and Nobel Prize-worthy problems like protein folding, as AlphaFold did. And the fact that humans with AI did that gives us a glimpse of the humanly useful things AI can do, not in an autonomous state, but working with humans.

They are also useful to a point in driving the narrative for companies who are building them to show how smart they are.

However, the real world is beautifully chaotic and messy. We as humans can deal with that; LLMs cannot. I will give you an example from my work to illustrate this.

I was working on my fully automated process for creating YouTube thumbnails. I made a mistake: I forgot my naming conventions, and instead of gaze-direction-mood, I named one source image mood_direction_composition_gaze.

Here is what happened: the system chose this new image, whose name carried a longer and perhaps more complete context, virtually all the time. The system broke; a human would have spotted this and either proactively called it out or fixed the problem.

It means you need very careful construction in any 'system of work' involving LLMs. In fact, I believe this is why human value at work is safe from these LLM-centric systems for some time yet.
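One small piece of that careful construction is a guard that checks file names before the LLM ever sees them. The following is a minimal sketch, assuming the gaze-direction-mood convention described above; the function names and the sample file names are hypothetical and are not taken from my actual thumbnail system.

```python
import re

# Assumed convention from the example above: gaze-direction-mood,
# three fields separated by hyphens.
EXPECTED_FIELDS = ("gaze", "direction", "mood")


def check_image_name(stem: str) -> list[str]:
    """Return a list of problems with a file name (without extension).

    An empty list means the name follows the assumed convention.
    """
    problems = []
    if "_" in stem:
        problems.append("uses '_' as a separator; expected '-'")
    parts = re.split(r"[-_]", stem)
    if len(parts) != len(EXPECTED_FIELDS):
        problems.append(
            f"has {len(parts)} fields; expected {len(EXPECTED_FIELDS)} "
            f"({'-'.join(EXPECTED_FIELDS)})"
        )
    return problems


def filter_candidates(stems):
    """Split names into those safe to hand to the model and those to flag."""
    accepted, rejected = [], {}
    for stem in stems:
        problems = check_image_name(stem)
        if problems:
            rejected[stem] = problems
        else:
            accepted.append(stem)
    return accepted, rejected


# Hypothetical source images: one follows the convention, one does not.
accepted, rejected = filter_candidates(
    ["left-up-happy", "happy_up_closeup_left"]
)
for stem, problems in rejected.items():
    print(f"Flag for a human: {stem}: {'; '.join(problems)}")
```

A check like this does not make the model any smarter; it simply does the spotting that a human would have done, and stops the odd one out from reaching the selection step.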

It also raises an uncomfortable question: if you need to put this much effort into getting to that point of ‘real world’ reliability, you may well be better off with a person to do the work. That, however, is the subject of another article.

Capability From Specialist Models

A second driver of the capability jump is the emergence of specialist models that excel at well-defined, deterministic tasks.

Take, for example, programming in JavaScript, Python, or SQL; the syntax is strictly defined, but even here, there are ‘dialects’. As an example, BigQuery SQL structures INSERT/MERGE INTO queries differently from standard SQL. Also, systems like your CMS, which may use its own JavaScript libraries, will need specialist knowledge for reliability.

Moving away from programming and into the real world, specialist, fit-for-purpose AI systems like AutoPod will save you a ton of time editing a multi-camera shoot, for a podcast perhaps. More importantly, these specialists will plug into the tools your talented team already uses. So you can continue to use CapCut, Adobe Premiere Pro, or DaVinci Resolve, and not have to go through the re-tooling pain.

On a, perhaps, more tangible note, we are already using specialist models, such as Veo3, Sora (now retired), Kling, and more. These diffusion models for image, video, and even audio synthesis are proof that specialisation and capability leaps occur precisely because they are not trying to be general in the context of the outcomes they generate.

These specialist models will also become very useful in the quest to democratise access to data. Two years ago, for example, I would not have let a marketer anywhere near BigQuery; they would have had to request analysis or mini data pulls.

But now, with a specialist BigQuery LLM, they can talk to data and get actionable insights much more quickly, all with minimal tooling. In fact, just as I was putting together final edits, Google released a specialist BigQuery Gemini model which will further help you to talk to data. Bottom line, you will see specialists pop up everywhere.

Example: The Human-Computer Interaction Changes

Another manifestation of LLMs and their spin-off capabilities is how we engage with machines. They enable solutions like Wispr Flow, which is finally removing the friction that existing Human-Computer Interfaces (HCI) impose on getting things done.

Up until now, dictation and voice control solutions were as pleasant an experience as going to the dentist, as anyone who used Dragon voice-to-text in the 90s will know. In fact, one of the AI specialist models is voice-to-text. The spin-off from the AI arms race is that we now have very cheap access to long-form voice-to-text, something we did not have in 2023.

We can now use voice reliably in a ‘system of work’. Voice inputs recorded over time were used, for example, to synthesise the first draft of this article: many voice notes and observations were recorded walking around my kitchen and collated with the help of AI.

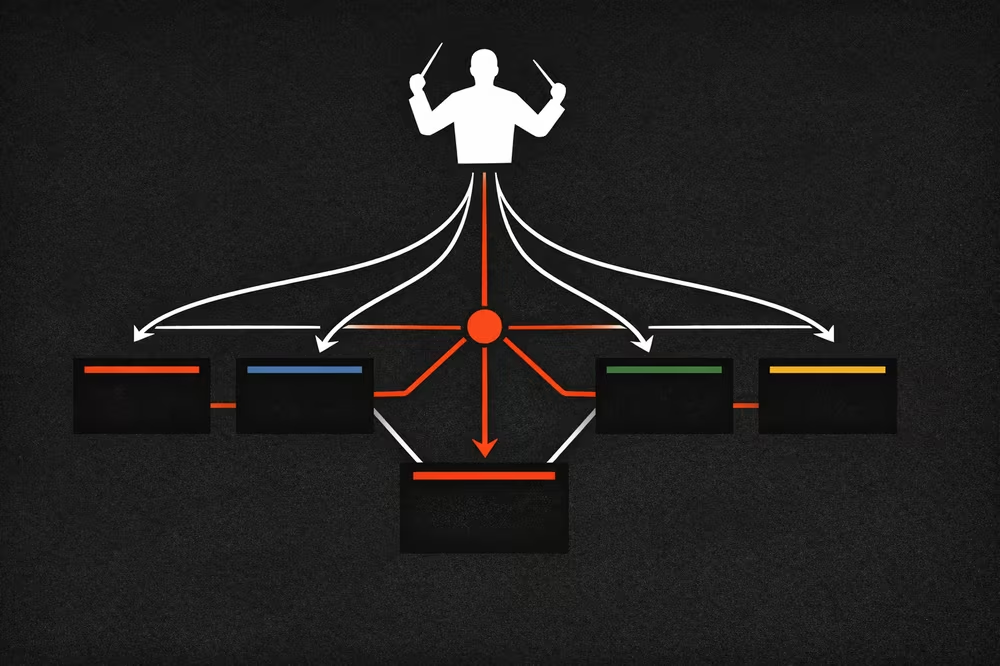

Capability Through Orchestration of Specialist Models

The third capability driver is orchestration. The orchestration of specialist models is one of the most interesting directions for realising productivity gains and enabling symbiotic human/AI work in SMEs. The specialisation drive does not stop at the models themselves but extends to the platforms we use as a result.

Platform providers are building out their agentic interfaces, basically making them AI-ready, using the Model Context Protocol. This produces even more useful outcomes, as my own case study below shows.

Composing AI-Enabled Systems of Work

Composing what I call these AI-enabled systems of work is very different to buying a platform that supposedly helps you create things with AI. Only by composing a system of work that fits your intent, your business values, and the product you're creating will you get the maximum benefit.

And the ability to join up and create these systems of work is significantly easier today than it was even two years ago, with the maturing of protocols like the Model Context Protocol (MCP).

Think of it as one of the emerging fundamental enablers, just as APIs and JSON were integral to the manifestation of the modern digital world in enabling cloud-based platforms to communicate.
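To make the comparison with APIs and JSON a little more concrete: MCP messages are JSON-RPC 2.0, and a client asks a server to run a tool with the `tools/call` method. The sketch below only builds and prints such a request; it does not talk to a real server. The tool name `search_sessions` and its arguments are hypothetical, invented purely for illustration.

```python
import json
from itertools import count

# Simple incrementing request ids, as JSON-RPC expects each request
# to carry an id so the response can be matched to it.
_request_ids = count(1)


def build_tool_call(tool_name, arguments):
    """Build the JSON-RPC 2.0 request an MCP client sends to call a tool."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool exposed by a hypothetical platform's MCP server.
request = build_tool_call(
    "search_sessions",
    {"topic": "hybrid events", "limit": 5},
)
print(json.dumps(request, indent=2))
```

The point is less the code than the shape: every platform that speaks this protocol can be asked to do things in the same way, which is what makes joining specialists into a system of work so much easier than wiring up each platform's bespoke API.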

Eighteen months of experimenting to build the foundations of this site, Digitising Events, has shown me that enterprises will only start to see performance gains by exploding our current work systems [link to my article] and reconstructing them with AI woven into the fabric, not as a human replacement but as an enabler.

By thinking of the adoption of AI in this way and not just focusing on chatbots and their utility, there is a lot more we can get out of this technology beyond videos of cats on the moon, or indeed, gleeful announcements of job cuts. The accessibility of AI will fundamentally change our systems of work.